*This is not really meant as an introduction, although there is some of that, but rather as a reference of links and a reminder for people who already know some of the basics (i.e. me!). I have been busy researching high-dimensional forecasting, and this is part of the result.*

A time series is any series of numbers that occur sequentially at a specific frequency: 25 degrees today, 27 degrees tomorrow, 17 degrees the day after that… With such data, we are primarily interested in forecasting what the series will be in the future: forecast demand for a product, forecast the weather, forecast the stock market…

Forecasting is one of the few areas where machine learning is quite capable, but the tools are not as consistently brought together as, say, sklearn in Python or caret in R are for general machine learning. This is somewhat understandable, as it is a specific use case with specific transformations and assumptions to check. Loosely speaking, ‘time series’ often refers to forecasting methods where you don’t use any external data to model the series: you just use the patterns in that series to predict its future. More advanced methods can also include outside information as additional variables.

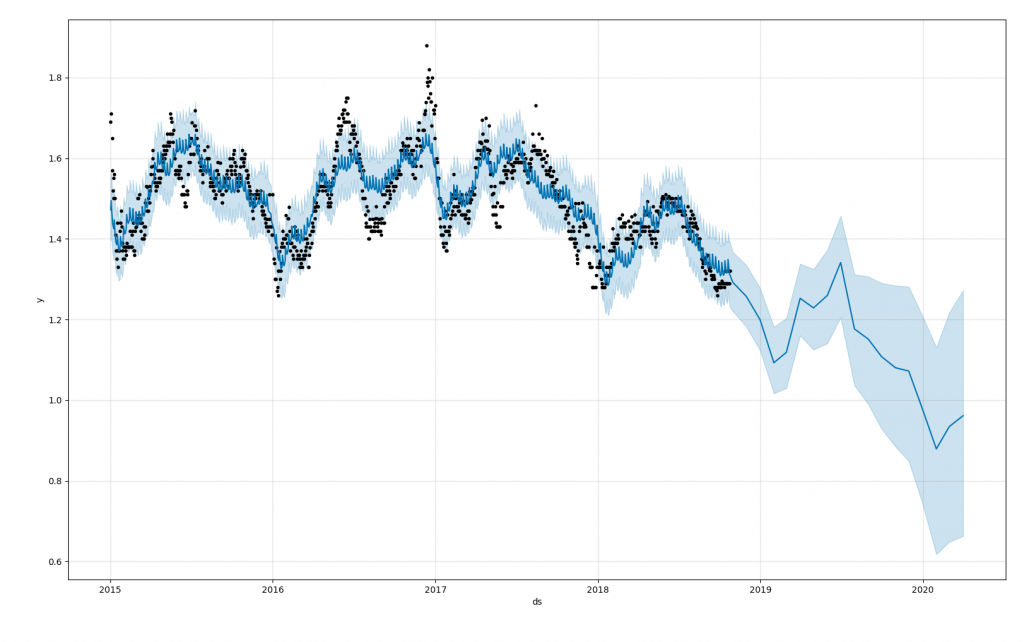

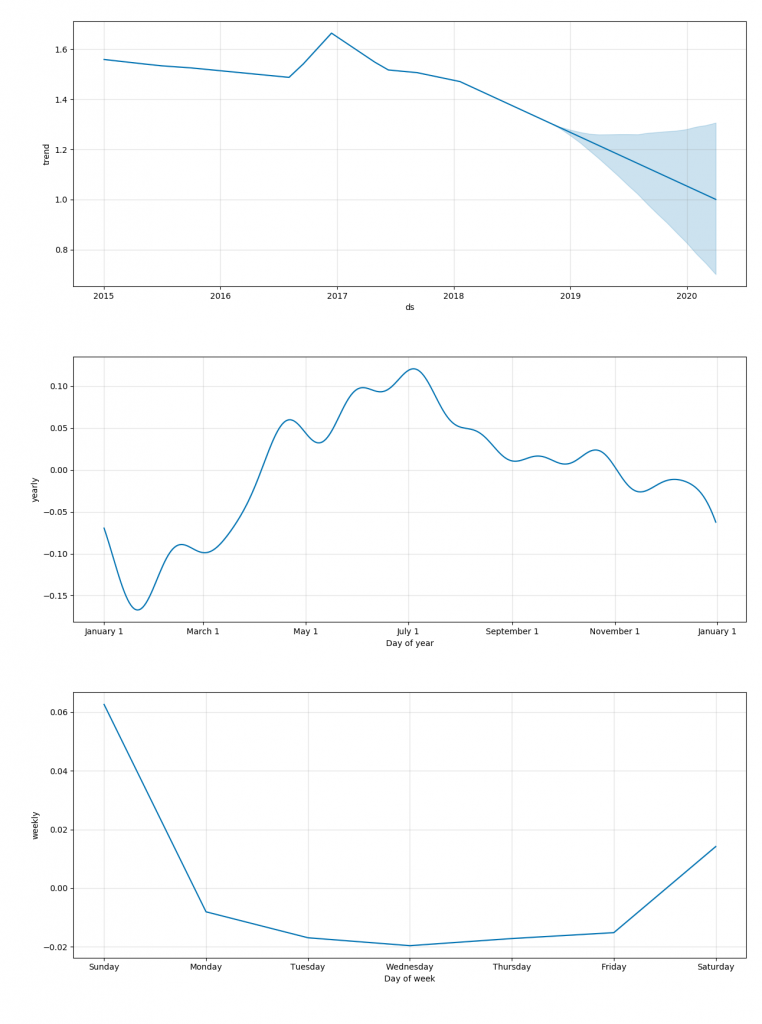

My default (and many programmers’) is Facebook’s Prophet, which can be used in R or Python and offers great flexibility with good speed, accuracy, and simplicity. Deep learning RNNs are the most accurate in advanced cases, but they are harder to put into production, their minimal gains may not be worth it, and, as I’ve also seen, they sometimes fail to converge. ARIMA is the king of old-school methods, but ETS is usually faster and more accurate in my experience.
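
ETS is a family of exponential smoothing models. Its three simplest members are simple exponential smoothing, Holt’s linear trend, and Holt’s damped trend. They are short enough to write by hand, and their plain average is the “Comb” benchmark from the M4 competition. Below is a minimal pure-Python sketch that also includes the naïve baselines. It assumes fixed smoothing parameters (alpha=0.5, beta=0.3, phi=0.9, chosen only for illustration) rather than the estimated ones that R’s `forecast::ets` or `statsmodels` would fit. It also ignores seasonality entirely. The function names are hypothetical.

```python
from statistics import fmean


def naive(y, h):
    """Naïve benchmark: repeat the last observed value h times."""
    return [y[-1]] * h


def mean_forecast(y, h):
    """Naïve benchmark: repeat the historical mean h times."""
    return [fmean(y)] * h


def ses(y, h, alpha=0.5):
    """Simple exponential smoothing: flat forecast at the final smoothed level."""
    level = y[0]
    for value in y[1:]:
        level = alpha * value + (1 - alpha) * level
    return [level] * h


def holt(y, h, alpha=0.5, beta=0.3, phi=1.0):
    """Holt's linear trend method; phi < 1 gives the damped-trend variant."""
    level, trend = y[0], y[1] - y[0]          # simple heuristic initialization
    for value in y[1:]:
        prev_level = level
        level = alpha * value + (1 - alpha) * (prev_level + phi * trend)
        trend = beta * (level - prev_level) + (1 - beta) * phi * trend
    forecasts, damping = [], 0.0
    for step in range(1, h + 1):
        damping += phi ** step                # phi + phi^2 + ... + phi^step
        forecasts.append(level + damping * trend)
    return forecasts


def comb(y, h):
    """M4-style 'Comb': arithmetic mean of SES, Holt, and damped Holt forecasts."""
    members = [ses(y, h), holt(y, h), holt(y, h, phi=0.9)]
    return [fmean(values) for values in zip(*members)]


sales = [10, 12, 13, 15, 16, 18, 19, 21]
print(naive(sales, 3))   # → [21, 21, 21]
print(comb(sales, 3))
```

A fancier model is only worth keeping if it beats `naive` and `comb` on the same holdout.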

Types of Time Series

- Naïve Time Series Methods (forecast every future day as the mean, forecast every future day as the most recent known value, etc.)

  - Easy, fast, and often surprisingly accurate

  - Always used as a benchmark for other methods

- Traditional Time Series Methods (AR, ARMA, ARIMA, ETS/exponential smoothing such as Holt-Winters, Theta)

  - `forecast` package in R (best) and `statsmodels` & `pmdarima` in Python

- Newer Time Series Methods (Prophet)

  - Prophet is always the first method I try, usable in R and Python

- Framing as a regression problem (create features, such as day of week and lags, for each outcome; the model itself is then not time-order sensitive)

  - Regular ML packages like `sklearn` in Python and `caret` in R, and many more

  - Cross validation can be used in a ‘time series’ (windowed) format for more consistent evaluation, so each fold trains on the past and tests on the window that follows

  - Can be great, but usually requires lots of creative feature engineering to perform well, as well as additional information for more variables

  - Your outcome (Y) is the time series value you care about, and your variables (X’s) are whatever you can dig up related to that particular time (its day of week, the stock market value at that time, whatever)

- Deep Learning (Recurrent Neural Nets: LSTM, etc.)

  - Keras: build your own LSTM or GRU, but it is a lot of work for a single time series

  - GluonTS: new to me; a quite intriguing, higher-level API for training on many related series and predicting rapidly. I’m pretty sure it needs lots of related series at once to perform well, but it is great for that use case.

  - Usually require lots of training time, and are only occasionally better than statistical methods.

  - The big problem is that these don’t generalize well, and to be super accurate they need a lot of hands-on tuning time. GluonTS’s models seem to be an exception to this, when applicable.

- Ensembling (i.e. combining multiple models)

  - Most accurate

  - Mixing machine learning with the traditional statistical methods gave the best results in the M4 competition

  - Comb, a benchmark in the M4 competition, is the simple arithmetic average of the Simple, Holt, and Damped exponential smoothing models. The best, most elaborate model beat it by only 10%. Interestingly, I have previously found that a similar simple ensemble (Prophet + ETS) worked very well for price forecasting.

- Hierarchical & Grouped Time Series

  - `hts` (built on `forecast`) in R; apparently common in commercial forecasting suites

  - Handles large groups of related time series that can then be aggregated, such as in demand forecasting (product category, product sub-category, then unique product)

  - GluonTS also fits here

  - PyAF seems the best there is for Python, but it is less mature than the R options
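
The hierarchical approach above is easiest to see in its simplest, bottom-up form. You forecast only the most detailed series (the unique products) and sum them up the hierarchy with a summing matrix S, so every level is coherent by construction. Below is a minimal pure-Python sketch. It assumes a hypothetical two-category, four-product hierarchy and bottom-level forecasts that came from any of the methods above. `HIERARCHY`, `summing_matrix`, and `bottom_up` are illustrative names, not part of `hts`, PyAF, or GluonTS.

```python
# Hypothetical hierarchy: total -> category -> product
HIERARCHY = {
    "snacks": ["chips", "pretzels"],
    "drinks": ["soda", "water"],
}


def summing_matrix(hierarchy):
    """Build S: one row per series (total, categories, products), one column per product."""
    products = [p for items in hierarchy.values() for p in items]
    labels = ["total"] + list(hierarchy) + products
    rows = [[1] * len(products)]                                   # total = all products
    rows += [[int(p in items) for p in products] for items in hierarchy.values()]
    rows += [[int(p == q) for p in products] for q in products]    # identity for products
    return labels, products, rows


def bottom_up(bottom_forecasts, hierarchy):
    """Coherent forecasts for every level: y_all = S @ y_bottom at each horizon step."""
    labels, products, S = summing_matrix(hierarchy)
    horizon = len(bottom_forecasts[products[0]])
    return {
        label: [sum(w * bottom_forecasts[p][h] for w, p in zip(row, products))
                for h in range(horizon)]
        for label, row in zip(labels, S)
    }


# Bottom-level forecasts could come from any method above (ETS, Prophet, etc.)
bottom = {"chips": [10, 11], "pretzels": [4, 4], "soda": [7, 8], "water": [3, 2]}
forecasts = bottom_up(bottom, HIERARCHY)
print(forecasts["snacks"], forecasts["drinks"], forecasts["total"])
# → [14, 15] [10, 10] [24, 25]
```

Bottom-up is only one reconciliation strategy. Top-down and MinT-style optimal combination use the same S matrix but derive the bottom level differently.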

Usually the simple place to start:

Packages (beyond R’s `forecast` and Python’s `statsmodels`, which are by far the most solid and best documented):

https://github.com/alan-turing-institute/sktime (in development)

https://github.com/awslabs/gluon-ts

https://deepai.org/publication/gluonts-probabilistic-time-series-models-in-python

https://github.com/antoinecarme/pyaf

https://github.com/CollinRooney12/htsprophet

https://github.com/ineswilms/bigtime

Open Source Auto-ML with Time Series options:

https://github.com/uber/ludwig

(With the regression framing, of course, many AutoML toolkits can be used, such as TPOT, auto-sklearn, H2O, etc.)
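
Whichever tool does the fitting (sklearn, caret, or one of these AutoML toolkits), the regression framing from the list above starts with a feature table. There is one row per time step with hypothetical features, and the series value is the target. The features here are an intercept, lag-1, lag-7, and a weekend flag that assumes the series starts on a Monday.

The sketch fits ordinary least squares by hand only to stay dependency-free. It scores the fit with rolling-origin (windowed) cross-validation, so every fold tests on data after its training window. Test rows use observed lags, so this measures one-step-ahead accuracy; a genuine multi-step forecast would feed predictions back in as lags. All function names are hypothetical.

```python
def make_features(y, lags=(1, 7)):
    """Turn a daily series into rows of X and targets; assumes y[0] is a Monday."""
    X, target = [], []
    for t in range(max(lags), len(y)):
        row = [1.0]                                   # intercept
        row += [y[t - lag] for lag in lags]           # lagged values
        row.append(1.0 if t % 7 >= 5 else 0.0)        # weekend flag (Sat/Sun)
        X.append(row)
        target.append(y[t])
    return X, target


def solve(a, b):
    """Gauss-Jordan elimination with partial pivoting (small dense systems only)."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_ols(X, target):
    """Ordinary least squares via the normal equations (X^T X) beta = X^T y."""
    k = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yt for row, yt in zip(X, target)) for i in range(k)]
    return solve(xtx, xty)


def predict(beta, X):
    return [sum(b * x for b, x in zip(beta, row)) for row in X]


def rolling_origin_splits(n, initial, horizon):
    """Windowed CV: each fold trains on rows [0, end) and tests on the next `horizon` rows."""
    end = initial
    while end + horizon <= n:
        yield list(range(end)), list(range(end, end + horizon))
        end += horizon


def cross_validate(y, initial=28, horizon=7):
    """Mean absolute error per fold (one-step-ahead: test rows use observed lags)."""
    X, target = make_features(y)
    errors = []
    for train, test in rolling_origin_splits(len(target), initial, horizon):
        beta = fit_ols([X[i] for i in train], [target[i] for i in train])
        preds = predict(beta, [X[i] for i in test])
        errors.append(sum(abs(p - target[i]) for p, i in zip(preds, test)) / len(test))
    return errors


# Synthetic series that is exactly linear in the features, so every fold should score ~0
series = [5.0, 3.0, 8.0, 1.0, 9.0, 2.0, 7.0]
for t in range(7, 60):
    weekend = 1.0 if t % 7 >= 5 else 0.0
    series.append(1 + 0.5 * series[t - 1] + 0.3 * series[t - 7] + 2 * weekend)
print(cross_validate(series))
```

Because rows are built before fitting, the model itself does not care about their order; only the cross-validation split has to respect time.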

https://github.com/MaxBenChrist/awesome_time_series_in_python

High-Dimensional Time Series References:

https://otexts.com/fpp2/hierarchical.html (R is still the easiest and most developed place to do forecasting)

Papers:

https://eng.uber.com/m4-forecasting-competition/

https://arxiv.org/pdf/1704.04110.pdf

https://ideas.repec.org/a/for/ijafaa/y2012i26p20-26.html

https://robjhyndman.com/papers/Hierarchical6.pdf

https://robjhyndman.com/publications/mint/

https://aws.amazon.com/blogs/opensource/gluon-time-series-open-source-time-series-modeling-toolkit/

http://proceedings.mlr.press/v89/gasthaus19a/gasthaus19a.pdf

https://arxiv.org/pdf/1711.11053.pdf

Cross Validated (Stack Exchange):

https://stats.stackexchange.com/questions/371295/training-one-model-to-work-for-many-time-series